Matrix Addition and Subtraction

Definition 1. You can add and subtract matrices of the same shape.

Let A and B be r × c matrices with A = [aij] and B = [bij]. Then A + B is an r × c matrix with A + B = [aij + bij] and A – B is an r × c matrix with A – B = [aij – bij].

Operations between a Matrix and a Scalar

Definition 2: A matrix can be multiplied (or divided) by a scalar. A scalar can also be added to (or subtracted from) a matrix.

Let A be an r × c matrix with A = [aij] and let b be a scalar. Then bA and A + b are r × c matrices where bA = [b · aij] and A + b = [aij + b]. We can define Ab and b + A in a similar fashion. Clearly, b + A = A + b and Ab = bA. Division and subtraction of matrices by scalars can be defined similarly.

Matrix Multiplication

Definition 3: You can multiply two matrices, but only if they have compatible shapes.

Let A be a p × m matrix with A = [aij], and let B be an m × n matrix with B = [bjk]. Then AB is a p × n matrix with AB = [cik] where

Observation: For the multiplication AB to be valid, the number of columns in A must equal the number of rows in B. The resulting matrix will have the same number of rows as A and the same number of columns as B.

The associative law holds, namely (AB)C = A(BC), i.e. it doesn’t matter whether you multiply A by B and then multiply the result by C or first multiply B by C and then multiply A by the result. It is essential that the matrices have a compatible shape. Thus if A is p × m, B is m × n and C is n × s then ABC will have the shape p × s. The distributive laws, namely A(B + C) = AB + BC and (A + B)C = AC + BC, also hold.

The commutative law of addition holds, namely A + B = B + A, but the commutative law of multiplication does not hold even when the matrices have a suitable shape; thus, even for two n x n matrices A and B, AB is not necessarily equal to BA. For square matrices, the trace of AB is equal to the trace of BA though.

Trace and Matrix Operations

Property 0: For square matrices A and B of the same size and shape and scalar c:

- Trace(A+B) = Trace(B+A)

- Trace(cA) = c Trace(A)

- Trace(AB) = Trace(BA)

Proof: The proofs are straightforward, based on the definition of trace and matrix addition and multiplication.

Transpose of a Matrix

Definition 4: The transpose of an r × c matrix A = [aij] is the c × r matrix AT = [aji].

A (square) matrix A is symmetric if A = AT

Property 1:

- (AT)T = A

- (AB)T = BTAT

- If A and B are symmetric and AB = BA then AB is symmetric

- Trace(A) = Trace(AT)

Proof: We prove (c). Assume that A and B are symmetric. By Definition 4 and Property 1b, AB = ATBT = (BA)T = (AB)T

Observation: If A is a column vector then ATA is a scalar. In fact, ATA = ‖A‖2. Thus, a column vector A is a unit vector if and only if ATA = 1.

Inverse of a Matrix

Definition 5: An n × n matrix A is invertible (also called non-singular) if there is a matrix B such that AB = BA = In (where In is the n × n identity matrix). A–1 is the inverse of A provided AA–1 = A–1A = In. A matrix that is not invertible is called singular.

Property 2: If A is invertible, then its inverse is unique.

Proof: Suppose B and C are inverses of A. Then by the associative law, C = IC = (BA)C = B(AC) = BI = B, and so C = B.

Observation: In fact, if there is a matrix B such that AB = In or BA = In then A is invertible and A–1 = B.

Property 3: If A and B are invertible, then (A–1)–1 = A and (AB)–1 = B–1 A–1

Proof: The first assertion results from the first assertion of Property 2.

Since (AB)(B–1A–1) = A(BB-1)A–1,= AIA–1 = AA–1 = I the second assertion follows from the second assertion of Property 2.

Property 4: If A is invertible, then so is its transpose and (AT)-1 = (A-1)T

Proof: By Property 1b, AT(A-1)T = (A-1A)T = IT = I. Similarly, (A-1)TAT = (AA-1)T = IT = I.

Property 5: A is symmetric if and only if A-1 is also symmetric

Proof: Assume A is symmetric, then by Property 4, (A-1)T = (AT)-1 = A-1, and so A-1 is also symmetric. For the converse, assume that A-1 is symmetric, then from the above, it follows that (A-1)-1 is symmetric, but by Property 3, this means that A is symmetric.

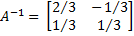

Example 1: Find the inverse of

Since the inverse of A takes the form

where AA–1 = I2, it follows that

Thus we need to solve the following four linear equations in four unknowns:

![]()

![]()

![]()

Solving these equations yields a = 2/3, b = -1/3, c = 1/3, d = 1/3, and so it follows that

Excel Functions

Excel Functions

Excel Functions: Excel provides the following array functions to carry out the various matrix operations described above (where we conflate the matrices A and B with the arrays on an Excel worksheet that contain these arrays).

MMULT(A, B): If A is a p × m array and B is an m × n array, then MMULT(A, B) = the p × n matrix AB.

MINVERSE(A): If A is an n × n square array, then MINVERSE(A) = A–1.

TRANSPOSE(A): If A is an m × n array, then TRANSPOSE(A) = AT.

Note that since these are array functions, most Excel users can’t simply press Enter when using these functions. E.g. for MMULT, you must first highlight a p × n range before entering =MMULT(A,B) and then you must press Ctrl-Shft-Enter. This is not necessary for Excel 2021 and Excel 365 users where MMULT can be treated as a dynamic array function.

Versions of Excel starting with Excel 2013 also provide the array function MUNIT(n) which returns the n × n identity matrix.

You can also transpose an array A in Excel by copying the array (i.e. by highlighting the array and pressing Ctrl-C), clicking where you want AT located (i.e. the cell at the upper left corner of AT), and then selecting Home > Clipboard|Paste and choosing the Transpose option.

Using the +, -, *, /, and ^ operators with matrices

In addition, if A and B are defined as arrays (e.g. they are named arrays or entities such as B5:F8 or they are the results of matrix operations such as TRANSPOSE, INVERSE, or MMULT, then they can be manipulated using the +, -, *, / and ^ operators. These operations are done on a cell-by-cell basis.

For example, suppose range B2:C3 contains

If you highlight range D7:E8, enter =2*B2:C3+TRANSPOSE(B2:C3) and then press Ctrl-Shft-Enter, D7:E8 will contain

Note that D7:E8 must have the same shape as B2:C3 or an error will result. The following also holds.

Note too that if A is an m × n matrix and B is a 1 × n matrix (i.e. a row vector) then A + B is a valid operation in Excel and gives the same result as A + C where C is an m × n matrix all of whose rows contain the same data as B. Similarly you can calculate A – B, A*B and A/B. Also, you can calculate A + B, A – B, A*B, and A/B where B is an m × 1 column vector.

E.g. Suppose B2:C3 contains and E2:E3 contains

. If you highlight F4:G5, enter =B2:C3–E2:E3 and then press Crtl-Shft-Enter, F4:G5 will contain

.

Matrix products, differences, and power

Real Statistics Functions: The Real Statistics Resource Pack supplies the following functions:

MPOWER(A,n) = An

MSUB(A,B) = A – B

MPROD(A,B,C,D) = ABCD

Note that for MSUB, arrays A and B must have the same size and shape. Also, for any element in A and/or B that is not numeric, the corresponding element in the output will be blank.

MPROD can be used with 2, 3, or 4 compatible arrays. The function MPROD(A,B) is equivalent to MMULT(A,B). MPROD(A,B,C) is equivalent to MMULT(MMULT(A,B),C). MPROD(A,B,C,D) is equivalent to MMULT(MMULT(A,B),MMULT(C,D)).

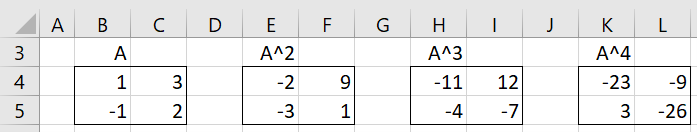

Example 2: Find A4 where A is the 2 × 2 matrix shown in range B4:C5 of Figure 2.

Figure 2 – MPOWER

The result is shown in range K4:L5. You can obtain the same result via the array formula =MMULT(H4:I5,B4:C5) or =MMULT(B4:C5,MMULT(B4:C5,MMULT(B4:C5,B4:C5))). Alternatively, you can use the array formula =MPOWER(B4:C5,4).

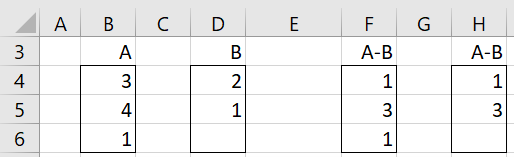

Example 3: Find A-B for the arrays shown in Figure 3.

Figure 3 – MSUB

Using the Excel array formula =B4:B6-D4:D6, we obtain the result shown in range F4:F6. Here the blank in cell D6 is treated as a zero. If cell D6 had contained any other non-numeric cell, then cell F6 would contain an error value. Using the Real Statistics formula =MSUB(B4:B6,D4,D6), we instead obtain the result shown in range H4:H6, where any non-numeric cell is treated as a blank.

Independence

Definition 6: Vectors X1, …, Xk of the same size and shape are independent if for any scalar values b1, … bk, if b1 X1 +⋯+ bk Xk = 0, then b1 = … = bk = 0.

Vectors X1, …, Xk are dependent if they are not independent, i.e. there are scalars b1, … bk, at least one of which is non-zero, such that b1 X1 +⋯+ bk Xk = 0.

Observation: If X1, …, Xk are independent, then Xj ≠ 0 for all j.

Property 6: X1, …, Xk are dependent if and only if at least one of the vectors can be expressed as a linear combination of the others.

Proof: Suppose X1, …, Xk are dependent. Then there are scalars b1, … bk, at least one of which is non-zero such that b1 X1 +⋯+ bk Xk = 0. Say bi ≠ 0. Then

Now suppose that Xi = . Then b1 X1 +⋯ + bk Xk = 0, where bi = -1, and so X1, …, Xk are dependent.

Dot Product

Definition 7: The dot product of two vectors X = [xi] and Y = [yj] of the same shape is defined to be the scalar

If X and Y are n × 1 column vectors, then X∙Y = XTY = YTX. Also ||X|| = √X·X.

Excel Function: If R1 is an array containing the data in X and R2 is an array containing the data in Y then the dot product X · Y = SUMPRODUCT(R1, R2).

Definition 8: Two non-null vectors of the same shape are orthogonal if their dot product is 0.

Real Statistics Tool

Click here for a description of Real Statistics’ Matrix Operations data analysis tool.

Examples Workbook

Click here to download the Excel workbook with the examples described on this webpage.

References

Cliff Notes (2021) Operations with matrices

https://www.cliffsnotes.com/study-guides/algebra/linear-algebra/matrix-algebra/operations-with-matrices

Better Solutions (2021) Matrix functions

https://bettersolutions.com/excel/functions/matrix-category.htm

Excel Tip (2025) Matrix properties and matrix calculations in Excel

https://www.exceltip.com/tips/matrix-properties-and-matrix-calculations-in-excel.html

Hi Charles,

This is about finding inverse of matrix A using excel solver.

As depicted in demo, to get the inverse of matrix A, one need to solve four linear equations in four unknowns, with constraint of SSE=0 (this is based on, similar to, demonstration on usage of excel solver, also checked in Multistart). As the inverse matrix is provided in dem0, I have set in initial values close to the answer (values initial set were a=0.5, b=-0.2, c=0.2 and d=0.2) and tried to solve using solver to get exact values as provided in demonstration. However, I am getting different values, with error “Solver could not find a feasible solution”. Please suggest.

Thanks!

From your comment, I understand that you are trying to find the inverse to a 4 x 4 matrix using Solver based on some demo. Without more information about the demo or how you set up Solver, it is difficult for me to address your comment. Since you have 4 linear equations in 4 unknowns, you have to initialize more than four values (a, b, c, d).

Can you send me an Excel file with your data and any formulas used by Solver?

Charles

Do you know how to use Excel’s Matrix formulas on a horizontal vector of values, interpreted as an array. For example a 3 x 3 matrix listed in a spreadsheet on 1 horizontal range of 9 values:

1 2 3 4 5 6 7 8 9

How can I take the determinant of this matrix using mDETERM?

Thanks.

Hello Gerald,

Assuming that the horizontal range is located at A1:I1, you can use the following array formula:

=MDETERM(RESHAPE(TRANSPOSE(A1:I1),””,3,3))

This approach uses the Real Statistics array formula RESHAPE.

Charles

Charles, disregard my last. I deselected the Add-in and then reelected it, and now the functions work. The ctrl-m works also. Awesome!! Thanks.

Charles, I am excited to use your Resource Pack. Specifically, I am working with eigenvalues and eigenvectors. I have downloaded the Add-In and it is showing up in my Excel Add-ins on the Developer tab. I am using Excel 2016. The eVALUES(R1,iter,order), eVECTOR(R1,iter,order), and eVECT(R1) are just showing the #NAME?. I did a ctrl-m as shown on your Matrix Operations page and did not get the Matrix Operations pop-up to make sure that Eigenvalues/vector or Eigenpairs options were selected.

Do I need to do anything to activate Resource Pack? Should the functions just start working?

Hi Charles,

I am trying to complete example 2 on this page and am running into troubles. I am running excel 2011 on my mac and when i type VER(), I get the response 5.0 excel mac. However, when I try to use example 2, I get the error ‘compile error in hidden module: Matrix’. I also get this same error when I am trying to use the evectors command. Do you know how to troubleshoot this error?

Thanks

can i get a idea how to calculate via row operation method?

Dave,

Sorry, but I don’t understand your question. What is the row operation method?

Charles

Hi Charles,

I’m not sure how you arrive at the a + c, b + d, -a + 2c, and -b + 2d figures for the inverse matrix. I’m looking at the algebraic expression you wrote to arrive at it but the light bulb isn’t turning on, sorry.

I know I can plug those in when determining one for a 2×2 matrix but it’d be nice to know in case I need to find the inverse of a larger one.

Jonathan,

I just used matrix multiplication and the fact that the product of the two 2×2 matrices must be the 2×2 identity matrix, which means that the values on the main diagonal are 1’s and the values off this diagonal are 0’s.

Charles

Hi Charles,

Question:

What is I2? You mention it in example 1 but I’m not clear what it means or how you arrived at the [1, 0, 0, 1] figures.

Thank you.

Jonathan,

I_2 is the 2 x 2 identity matrix.

Charles

Dear Charles:

Thanks for your program! it has been very useful. I have a question. When I use the matrix operations eigenvalues/eigenvectors on the same matrix of your example:

3 -4 9

5 2 3

7 1 6

I get the following result:

Eigenvalues/vectors (QR Factorization)

11,72209274 -1,651750232 0,929657496

0,511963902 -0,38469524 -0,768051128

0,482584475 -0,610875362 0,627649199

0,71063717 0,691983283 0,127098183

1,79176E-12 6,03212E-12 3,84593E-12

1,06581E-14 6,881844823 4,104277377

the first row 1×2 is the eigenvalues, next is the 3×3 matrix of eigenvectors, but next there are 2 new 1×3 rows, what are they?

Thanks a lot!

See the following webpages

https://real-statistics.com/linear-algebra-matrix-topics/eigenvalues-eigenvectors/

https://real-statistics.com/linear-algebra-matrix-topics/eigenvectors-for-non-symmetric-matrices/

Charles

Thanks Charles!

the matrix operation doesn’t work on mac!

Fran,

This is the first time I have heard of this. What exactly doesn’t work?

Charles

Good Article

When I try to create the output from Matrix Operations I get the error “Compile error in hidden module: frmMatrix”. The Addin is saved in my Downloads folder but it opens, it just doesn’t compute. I do not have Admin authority and frequently IM blocks access to certain functions / executions. Am I missing something or could this function be blocked?

You need to install the software so that Excel recognizes it as an addin. The instructions for doing this are described on the Download webpage.

Charles